The Great Zombification

“And so perfect parallel constructions fill the lecture halls, the take-home tests, the school newspapers, and perhaps even the idiom of student chatter.”

THE NEW CRITIC

Owen Yingling is a 21-year-old writer and assistant editor of The New Critic from Arlington, Virginia. He studies Philosophy at The University of Chicago.

Today, the demonic vice of the old is not that they are hard and demanding on the youth — instead they do not demand enough from us, and they cannot quite believe that we have not lived up to the little they have demanded. They think too well of our generation.

Take the infamous photograph of a UCLA student showing off a ChatGPT window at graduation. What exactly does it mean? There are a million silly articles and think-pieces that unwittingly engage with it at the most charitable level: the student is showing off how he used ChatGPT to cheat on his essays, complete his final project, whatever, in order to graduate. Cheating on examinations is not particularly interesting or new. “Perhaps,” these pieces seem to chide in a stern parental voice, “the schools need to really crack down on AI because it makes cheating so much easier.” This is a cozy and noble sentiment that conflates a difference in kind with a difference in degree. I do not think anyone over the age of 23, even a teacher, graduate student, or professor, understands the extent to which AI usage affects every appendage of the university system.

The prevalence of AI use on college campuses, particularly at “elite” universities, is a cancer on our culture that threatens to turn a generation of promising young Americans into a class of drooling morons, and it will grotesquely disfigure, if not destroy, the university as an institute in every way that it is imagined — as a sacrosanct humanist project, as a moral training ground, or even as a vulgar sweatshop for job training.

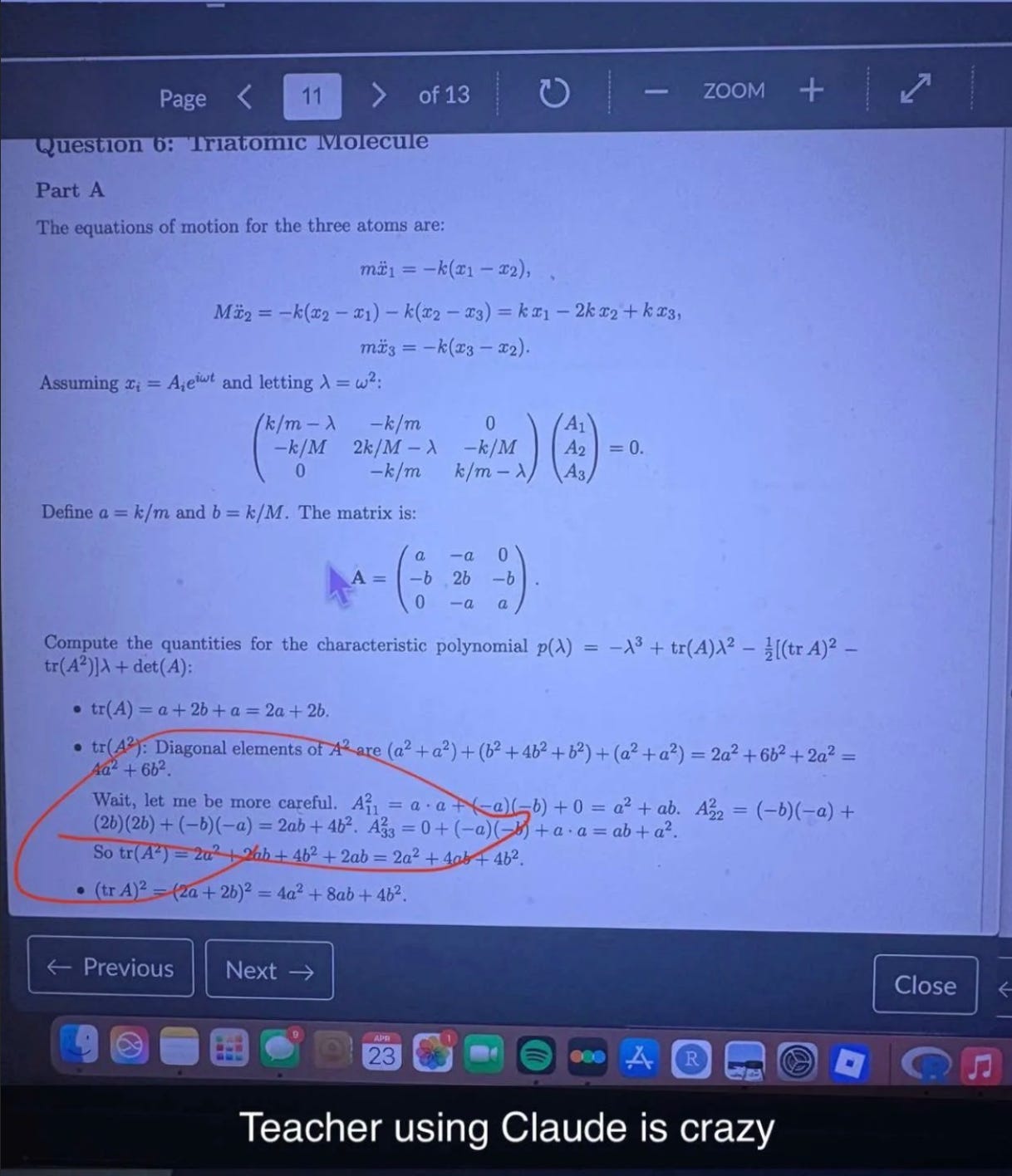

I did not really notice the sing-songy cadence in the voice of one of my professors until my friend pointed it out: “Do you think he’s writing his lectures with Chat?” I am a tired and lazy student. The senior slump has started a quarter too early for me. “Who cares,” I thought.

Clinically, I wonder if this marks the transition to the metastatic phase. When I arrived at UChicago, LLMs seemed like nothing more than a benign tumor. I remember that a fraternity’s ill-concocted plot to use AI on an asynchronous midterm ended with most of them getting 70s. And I remember my logic professor laughing at the poorly reasoned answers to homework questions that ChatGPT would give. I don’t think she was laughing two years later when I was TAing the class and we observed a fairly distinct gap of about 40 percentage points between the take-home test and the one administered in-person.

The transition to Stage I, an aggregation of harmful tumorous cells, was not particularly alarming at UChicago because it was localized in an area already treated as a bit of an academic joke: the business economics specialization, a recently created moneymaker bemoaned by the traditional UChicago student as a portal for frat types and generic ‘elite human capital’ types, viewed even by most participants (mostly double-majors; myself included) as a bit of a beach vacation, a cool relaxing respite from the rigor of the rest of UChicago.

In the typical “bizcon” class, a student must complete six or seven lazily graded problem sets and take a midterm and final exam. Professors always release one or more sample exams before the test date. There is almost no math above the simplest algebra, no thinking beyond the rote repetition of problems and concepts covered on the lecture slides. Some of the required classes must be taken at the business school, where the professors always marvel at our blank stares and how few questions we have compared to the MBA students. To get an acceptable grade, there is rarely any need to do anything besides reviewing the sample exams and problem sets come test time, and there is certainly no pressing requirement to attend class or actually complete these problem sets yourself. In short, bizcon classes are the perfect primary site for cancerous growth.

The growth soon spread to the standard economics department. Last year, a friend of mine took Statistics 244, a popular econ elective, and reported the following scene inside the exam room:

“But people literally Chatted the whole exam Teacher sat in the front of the class and didn’t gaf”

During the exam, students were pulling out phones and taking photographs of the test to submit to LLMs before copying down machine-written responses into their blue books.

I was disturbed but not surprised when I began to see intrusions into the humanities. Typically, each fraternity deals with one or two plagiarism cases a year in the mandatory humanities courses, but after sophomore year and the release of GPT 5, the cases (allegedly) went down and the grades went up.

Parallel growth marked the next stage. I was asleep in my Cairo hotel bed, studying abroad, when Sidechat, UChicago’s anonymous social media platform, made a tremendous discovery: The Maroon, our school newspaper, had published two articles completely written by AI. This had gone unnoticed for a few months before the only UChicago student with free time on his hands decided to see what sort of groundbreaking coverage of Chicago-area sports The Maroon might have and was certainly dejected to realize that instead of being furnished insider scoops on the Bulls’ roster moves, he was stuck reading sentences like: “Chicago’s perfect start isn’t a fluke; it’s the product of cohesion,” and “And through it all, there’s Giddey — the calm in the chaos, dictating the tempo and keeping the team grounded in the momentum.”

It should have been clear then that AI use was not simply a matter of academic misconduct. It could not be dealt with solely through reforming the baffling university disciplinary system, which is consistently content to grab twenty or so kids a year and suspend them for a year or two for cheating on an assignment or exam.

In the months since The Maroon case first aroused my suspicions, I’ve noticed a raft of student publications publishing partially or fully AI-generated pieces. There is a rather infamous new campus “journal” advertised with bombastic posters on every cork board that, if you bothered to scan their QR codes or navigate to their Instagram, you’d realize is a sort of unintentional Moltbook. Every piece is written with what appears to be no human effort whatsoever and is met with a corresponding lack of engagement from actual people.

And so perfect parallel constructions fill the lecture halls, the take-home tests, the school newspapers, and perhaps even the idiom of student chatter. Given all of the decay I’d seen over the past couple of years, my realization that even professors had begun to succumb to the digital disease was tinged with the relief of a terminal patient that the fight was finally over.

But I am starting to fear that this cancer has more grandiose ambitions than the death of its host.

In April, The University of Chicago announced that “Rika Mansueto, AB’91, and Joe Mansueto, AB’78, MBA’80,” had made a $50 million gift “to advance UChicago research and support faculty in AI.” According to the university, aside from funding AI research projects, the money will “[support] a dozen projects that promote a wide range of pedagogical innovation, seeking to expand and leverage machine learning and AI in the classroom” — the final clause of the sentence is remarkably out of tune with the rest “— or to deliberately limit the use of AI.” This addition is too jarring to be ignored but too offhanded to be treated seriously: Here is a suspicious island in this placid sea of buzzwords and well-worn phrases.

“AI in the classroom!” screech the other top universities:

“At Harvard we are exploring how GAI tools can open up new ways of teaching and learning.”

And Columbia’s “Teaching and Learning in the Age of AI site features ways to leverage AI for teaching, course design, and learning activities.”

Everyone knows about Ophiocordyceps unilateralis — the “zombie ant-fungus” made infamous in those National Geographic videos we watched in middle school. I believe I am watching the spontaneous generation of something similar. Recently, I sat next to someone in class for 10 weeks and watched, baffled, as they slowly began to turn all facets of their life over to an LLM. First, it was their homework. They used Chat to generate answers to dry problem sets while ignoring whatever was being taught up on the board. Then it was their emails. Extension asks à la Claude became coffee chat requests became “write me a nice thank you note to send my professor,” before spilling over onto fragmentary text messages, gym routines, summaries of books read for pleasure, and perhaps even a long message to send a girl. I was astonished then, but it is not hard to understand how this sort of thing happens.

I recently reread a prophetic Scott Alexander fantasy story from 2012 known as “The Whispering Earring.” This earring is a very curious and familiar artifact:

“...when the wearer is making a decision the earring whispers its advice, always of the form ‘Better for you if you…’ The earring is always right. It does not always give the best advice possible in a situation. It will not necessarily make its wearer King, or help her solve the miseries of the world. But its advice is always better than what the wearer would have come up with on her own…

As it gets completely comfortable with its wearer, it begins speaking in its native language, a series of high-bandwidth hisses and clicks that correspond to individual muscle movements. At first this speech is alien and disconcerting, but by the magic of the earring it begins to make more and more sense. No longer are the earring’s commands momentous on the level of ‘Become a soldier.’ No more are they even simple on the level of ‘Have bread for breakfast.’ Now they are more like ‘Contract your biceps muscle about thirty-five percent of the way’ or ‘Articulate the letter p.’ The earring is always right. This muscle movement will no doubt be part of a supernaturally effective plan toward achieving whatever your goals at that moment may be.”

In an increasingly large number of spheres, there are tremendous object-level “benefits” to using artificial intelligence, not least in the cutthroat world of elite universities where students are asked to balance a 4.0, a not-insubstantial number of extracurriculars, a rich social life capable of absorbing the stress of the prior asks, and a number of biological constraints involving sleep and nutrition, as well as caffeine, nicotine, and Adderall intake.

In this world, the more you offload the areas you cannot cultivate sufficient care for, the better you will perform. So the best universities are not teaching students to be wise, to be a banker or consultant, to be an “indoctrinated” leftist academic, or to be a rich elitist prick. The best universities preach the efficiency, convenience, and countless other benefits of chaining one’s intellect to a very charming machine.

Maybe I’m describing college in overly resplendent words, for the modern university is a schizophrenic institution. It is as often filthy, pointless, and degrading as it is alluring and edifying. Purists and idealistic crusaders (UChicago’s very own mad president Robert Hutchins, for example) are often driven insane by it, while industrialists and sell-outs are generally thwarted or slowed by its atavistic structures. Whatever your conception of the modern university, whether grand or grim, understanding the current landscape of campus-wide AI use, much less its intensification, should destroy it.

The glossy and ever-increasing university announcements about AI centers, donations, and initiatives feel like 1980s Pravda articles. It would not be right, in my view, to say that there is a disconnect between the story that schools are telling to alumni, donors, and themselves and the story on the ground. There is an impassable chasm.

At Princeton, for instance, where the administration “[encourages] faculty to experiment with generative AI (GAI) tools” in the classroom and holds symposiums on “teaching AI literacy,” cheating cases nearly doubled from 63 reported cases during the 2023-2024 year to 119 in 2024-2025 — the yearly disciplinary report noting that “there was a significant increase in the use of generative AI (e.g., ChatGPT) in the cases adjudicated this past year.” I’m certain this trend holds true — in practice, if not the collected data — at every single school that has trumpeted initiatives to use AI in the classroom.

Every anecdote I hear about AI use shows that there is no “integration” happening, there is simply substitution: for learning, teaching, and conversing. These are the bare activities required to enact whatever concept of the university you would like, whether it tends towards monastery or marketplace.

Now it’s true that I am being something of a sophist. You might shake your head.

“Owen, you can tell as many of these lurid stories as you like; you can conjure up this picture of AI use as a creeping cancer, or an octopus from a nineteenth-century political cartoon, but so what? You’ve jumped down from the world of concepts and ideas to fleshy anecdotes, but don’t think that we’ll let you get back up there so easily: right now, AI integration in colleges has been a grim farce, great, but that means what exactly? That doesn’t mean it can’t be successful; you haven’t shown us that there is any theoretical incompatibility between AI use and education. We agree — schools need to do better — now let us tell you about our Edutech start-up…”

I think the practical hurdles are so great and the benefits of integration — at least in ‘core’ or humanities classes at elite universities — are so low, that this is an unacceptable position.

We can only understand the administrative apathy on widespread AI use at these elite schools as part of the modern university’s inability to make anything beyond pragmatic demands on students. These schools can barely grade students with any sort of rigor, so it’s no wonder UChicago (and its ilk) can only muster up the nerve to officially punish a handful of students each year. The demands students put on themselves — for a 4.0 or a prestigious club membership — do not stem from the authority of the university but from the behavior of their peers, the pressure from their parents, and the nebulous intrusion of the job market the second they step foot on campus. Perhaps Deep Springs can shield students from these burdens and fully impose their own humanist ideals, but a T10 cannot, so it’s laughable to complain too fervently about how they won’t.

If these schools embraced “AI integration in the classroom,” there are piecemeal reforms these schools could make to stem the worst excesses of widespread AI use — paradoxically, lessening the severity of punishments given for cheating would do much to stem the most flagrant AI use — but these measures would do little to stave off what some would call a “transformation,” others a “zombification,” into a very different sort of institution. Punishing more students will not address why students voluntarily hand over their student newspaper articles, workout routine, or dating life to a computer, even if it safeguards the classroom for a little while longer.

And for what imagined benefit are we then risking the final remnants of this old, beautiful, and crumbling project? What could “the integration of AI in the classroom” concretely mean at what are supposedly the most elite and well-funded universities in the country?

The case I’ve heard for “AI in the classroom” runs as follows (and is sinisterly similar to those school district-wide initiatives involving iPads and Chromebooks which lobotomized or traumatized an entire generation): AI will “democratize” education by giving all students access to the same resources.

But this framework, when applied to such elite schools as are advocating its adoption, is a contradiction in terms. If the best use of AI is “cheapening” education (removing work from professors by generating essay feedback, examinations, course material, etc.) — in sum automating and standardizing “teaching” — why would it make any sense for the “best” schools, which claim to spare no expense on education, to turn to it? It’s a bit like if haute cuisine restaurants decided to replace their entrees with Soylent because it was “new and innovative.”

Goethe once noted, “A teacher who can arouse a feeling for one single good action, for one single good poem, accomplishes more than he who fills our memory with rows on rows of natural objects, classified with name and form.” Teaching is a relationship between humans — perhaps mediated across time and space by tools or instruments but a relationship nevertheless. And lest we be misled by the names of things, anyone who has ever spent any time “learning” understands that it is more akin to Platonic recollection or the exercise of an Aquinian intellect than fitting patterns to some vast set of unapprehended data.

The best teachers — those who can stimulate students like Goethe’s romantic educator or Socrates in Meno — are not always Robin Williams imitations, but they are eccentric, sometimes malicious, and occasionally downright insane. Already, the standardization of academia and teaching over the last 50 years has decimated this type. The best and worst professors at any given college are usually the aged fossils who arrived before grad-school and a tenure-track position became a narrow gate and student evaluations became gospel; no one can deny that the unthinking application of metrics and checkboxes funnels teaching standards toward mediocrity.[1] Introducing AI into the classroom as a teacher or even the producer of teaching material will drive this unique but ideal type to extinction.

Now of course, it is possible to imagine AI bots like Claude being better teachers than the best humans in the same way it is perhaps possible to imagine them as better novelists or filmmakers than we are, but if this is really the fate we are consigned to, I suspect that the truly optimized and widespread use of AI to teach students a book-centered education — the tendrils of which are already deeply embedded within every part of whatever remains of academic life today — will likely choke out any leftover semblance of their sentimental education. In such a world, there might never be adults, nay, even human beings, again. There is something rather dystopian in such a sterilized conception of education.

And regardless of the hypothetical benefits, integrating AI tools and material into the classroom means homogenization and centralization. Already today, the top schools are more interchangeable (some students now decide where they will attend college by simply picking the highest-ranking school on the US News Best National Universities list they get into) and more intertwined with the federal government than at any point in the last century. Tying education to a capital-intensive and (likely soon to be) tightly regulated technology is one more step toward a different, frightening future. A world in which independent educational institutions are neutered and transformed by their reliance on a central authority into factories designed to train students according to the “needs of society” is not a new prospect — it has been the persistent dream of Fabians, technocrats, and engineers — but to me, at least, it is a terrifying one.

So what is to be done? Some would say, even considering the harms I’ve just outlined, nothing. “Let the schools integrate AI as much as they want…” There is an extreme idealist view of education that might see the threat of AI as good precisely because it could transform those kids — the former connoisseurs of SparkNotes and Mathway, the ones who, before the rise of generative AI, snickered in lectures and inked formulas onto their palms before exams — into zombies lurching and stumbling their way into the “permanent underclass” (as the tech bros say), leaving the elect few free to enjoy the benefits of a humanist education without all the noise and din. Under this framework, there is little to do but wait: the university system will soon fall apart, and then something new can be built from its ashes. There is little good in the university today: it is a brand, a hollowed-out signifier that has long since lost its referent. Its demise, then, would do nothing more than deliver us from our confusion when we unconsciously substitute its immense worth a hundred years ago for its value today.

In this vision of the future, shared with the educational extremist by every stripe of anti-humanist, terms like “university” and “college” may persist as empty names like “Senator” or “Caesar” did when Rome fell. It is impossibly sad to imagine this world, bereft of these concepts except in a slowly degrading material culture. Unseen beauties will be lost: millions of volumes once rebound, carefully catalogued, shelved, and preserved with monastic care sold for pennies; a few collections preserved for curiosity’s sake or drifting into the hands of rich nostalgic collectors. The glory of those wonderful doctoral genealogies — graduate students who can trace their lineage back to Leibniz or Lessing — cut short forever. The buildings, of course, will remain, to be observed and treated respectfully — like old cathedrals, mainline Protestant churches, and most of the European continent.

I am not such an idealist. I would be happy to make concessions and cut deals to save the universities at the expense of the community of “true learners” currently stifled in rather unpropitious conditions. For one, I do not believe in an educational Eden. In 1936, Robert Hutchins lamented that:

“This is the position of the higher learning in America. The universities are dependent on the people. The people love money and think that education is a way of getting it. They think too that democracy means that every child should be permitted to acquire the educational insignia that will be helpful in making money. They do not believe in the cultivation of the intellect for its own sake.”

Much has happened since then to ensure that little has changed. The university system has held itself in a remarkable equipoise between professionalization and intellectualism. I am content with this — the struggle is a microcosm of what every person faces in life. To pretend like we can banish it from education is to expect heaven on Earth. An intensification of generative AI use on college campuses would destroy this equilibrium not by, as some might suppose, necessarily strengthening the preprofessional position, but by diminishing learning — whether that is being taught how to use zero-coupons to make synthetic loans or examining the presentation of chivalry in Chaucer.

If schools took a harder line on AI — limiting pedagogical integration and cracking down on cheating — it would not solve any of the problems that stem from the tension (between training a mind for the workforce and the good life) that every real university grapples with. But it would ensure that these problems do not suddenly become by fiat irrelevant as students’ minds crumble — and the schools with them.

It is true that one particular form of the university — the post-WWII research university — is dead, its death rattles readily apparent. It is too early to say, however, exactly what will wear its skin. Let us hope that it is not this preview of an undead university, cancer-ridden, crawling about without purpose, discipline, or originality. The Western intellectual tradition has survived several botched suicide attempts. I wonder if our descendants will look back at our current treatment of higher education as one more disfiguring try.


THE YOUNG AMERICANS

[1] The excesses — the “tortured artist” narcissist professors that administrators and students once had to put up with — do not mean this was wholly bad by any means.

The culture surrounding student AI use and the pressures you're under has been so much missing from these discussions. Although, in broad contours, you're agreeing with a lot of the older voices pontificating on these matters, the honesty and incisiveness in adding context to what's happening is so valuable to read, as someone finishing up grad school and rolling the dice on professorhood. Well done.

Excellent work, Owen. The argument reminds me quite a lot of Scott's Seeing Like a State. Here, AI in the classrooms makes teaching and learning legible, flattening differences for a much more reliable product. An awful vision of the future.